Getting AI Back on Track

“Altman

admits scaling isn’t the answer” - how long do we have to wait until Altman

admits LLMs aren’t the answer? What it shows is that a large percentage of people

working in Computer Science have no understanding of what they are doing when

reading or writing. How can that be – they can write programs. The symbols used

in programs have a single meaning, so no confusion. But a specification is

different – it uses words, and many words have multiple meanings. How do they handle

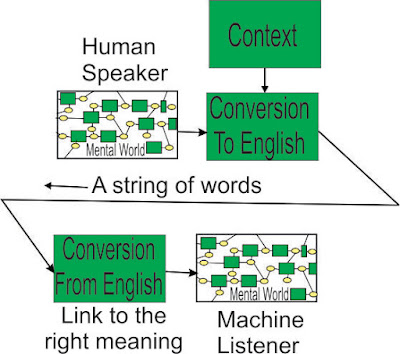

this? Humans have a very limited bandwidth, so most of the parsing is done

unconsciously, leaving enough bandwidth to understand the meaning of the

message. This is how they can be fooled into thinking an LLM is “reading” the

text, when it is doing no such thing.

An example:

A high jump frame holds a bar over which competitors have to jump. “Raising the bar” means increasing its height, which makes it harder to jump over. “Raising the bar” can also be used figuratively, meaning that something has been made harder to do.

Taiwan Semiconductor raised the bar on track widths by using extreme ultraviolet as the light source, making 2 nm widths possible.

The meaning is clear.

Another example:

Fred raised (the subject of) the bar on forever chemicals in drinking water at the meeting, saying the benefits did not justify the costs.

Raising a subject is a perfectly valid use of words, even when the subject happens to be a bar on something. A person’s unconscious parsing will apply the correct meaning; the LLM has no such luck. To make matters worse, the LLM only uses the words up to this point to guide it on the next word, whereas a human will also use the words that come after to provide context. This Simple Simon approach to extracting meaning from text shows how limited LLMs are: in complex text it may take many pages to establish the meaning being created by a few words, and those words may not be next to each other.
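The left-to-right point can be made concrete with a toy sketch. This is only an illustration of which words are visible on each side of a given position, not a description of how any particular LLM is built; the function names `left_context` and `full_context` are made up for this example.

```python
def left_context(tokens, i):
    """Words available to a strict left-to-right predictor when choosing tokens[i]."""
    return tokens[:i]


def full_context(tokens, i):
    """Words on both sides of position i, as a human reader can use them."""
    return tokens[:i], tokens[i + 1:]


sentence = (
    "Fred raised the bar on forever chemicals in drinking water at the meeting"
).split()

i = sentence.index("bar")            # position of the ambiguous word
seen = left_context(sentence, i)     # only the words before "bar"
before, after = full_context(sentence, i)

print("left-to-right sees:", seen)
print("a reader also sees:", after)
```

At the word "bar", the left-only view holds just "Fred raised the", which fits the high jump reading as well as any other. The words that settle the meaning ("on forever chemicals in drinking water") all come afterwards.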

What this demonstrates is the lack of ability of many people in Computer Science to understand reading and writing. Computer Science will carry this stain of foolishness or poverty of training for a long time. It is probably better to find a new standard: SAI Science (Semantic AI), perhaps.

At the sight of so many suckers eager to be fleeced, the large consultancies threw away their ethics and “went for it”. Getting AI back on track will be long and arduous.
