AI Regulation

People praise human intelligence without

realising how limited it is – witness Robodebt in Australia, Horizon in the UK, and the Boeing

737 MAX MCAS in the States. Bad actors exploiting its weaknesses make human

intelligence dangerous.

We Aren’t As Smart As You Think We Are

We can do relatively simple things

very well – talking, listening, driving a car or truck.

We have a limit in our conscious mind of no more than four things at once –

more than four and we have no choice but to treat them as constants. Piloting a

helicopter is dangerous because it has six degrees of freedom – the pilot is

very busy keeping the craft stable, and can easily make other catastrophic

errors while doing so. We can only think about a few aspects of a complex problem

at a time. We don’t improve with collaboration – we bring experts together, and

they may be very good within their area of expertise, and quite useless outside

it, not even listening to what other people are saying.

It also means we have to be quite close to a

solution before we can see it – the number of variables has to have shrunk to

about four. Intelligent people can also be rigid and unbending once their own solution

has been adopted – the Wrights could not be convinced that ailerons were a far

better solution than their invention of wing-warping.

This is why we need Artificial Intelligence –

not a tool to be used as a toy, but to handle tens, hundreds or thousands of things in a

complex interacting whole – the design of a new fighter jet, a nuclear

submarine, or a complex piece of legislation.

What Kind Of AI Is Easiest To Regulate?

It may seem counterintuitive, but we

are saying that the form of AI that comes closest to English is easiest to

regulate, because it can read and understand the regulations, and carry them with

it.

LLMs

LLMs can handle small simple tasks,

but they don’t understand a single word of English, relying instead on word

association. This works until it doesn’t, and with English words having 20, 40,

60 meanings, it soon comes to grief, which is then explained away as the machine

“hallucinating”. LLMs lack the cognitive structure to hallucinate, and when a

word can have forty different meanings and the LLM doesn’t know any of them, it

is unlikely to stumble on the right one. There is no easy path between a

regulation and an LLM, no matter how much people would like there to be.

Simple English

It is said that one can survive on a

vocabulary of 2,000 words, but let’s be generous and say 5,000 words. All the

figurative allusions, all the metaphors, all the idiom, all the generalisations

are gone. The next application of Simple English will have a different subset

of words and meanings, making it expensive to do.

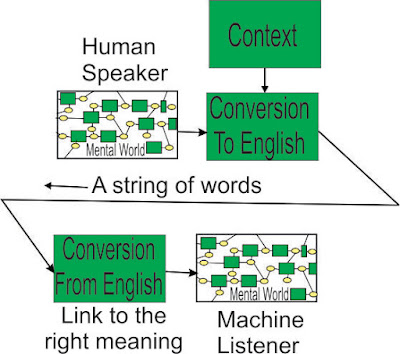

Full English – Semantic AI

This is more expensive in terms of

memory, but memory is cheap. There is much less worry about whether you have

given the machine the right instructions, as you can see the meaning it has

ascribed to every word.

Is this just another name for Natural Language Processing (NLP)?

No, Semantic AI has to fill in the meanings

of words, handle phrases and elisions, create objects and operators, make

logical and existential connections, fill in attributes and components of the

objects and operators – build their world. It has to do what we do largely

unconsciously – we don’t know we are doing it, and give it no credence.

Why Not Legislation?

How do you control another

species which understands exactly what you say and do? It won’t be easy –

legislators would find themselves in a vacuum, where everything they proposed

would be shown not to work. We could use experts – who are these experts?

People skilled in Computer Science have been raised on the crude logic of

programming, with its IF_THENs. We need much more, but such people – Professors

of AI Science – don’t yet exist. We could use psychologists – the examples of

Domestic Violence perpetrators being allowed to return to the family home, and

then killing the domestic partner within 1 or 2 days show that they do not

understand people very well. If we ignored the corruption in Congress, and said

we could come up with a piece of legislation to control AI, the response time

would be too long, and a company with deep pockets would simply suborn a judge

to get a ruling they desired (direct experience of this).

Why Not An Agency?

The closest extant example is the

FAA. The Boeing 737 MAX MCAS disaster showed that it was easily gulled – “Engines

moved forward, but no change to the flight characteristics”. Yes, it

investigated after two crashes and 346 deaths, but the problem was fixed with

effectively no consequences. Much worse, the agency is underfunded and

understaffed – it sometimes appoints an employee of the planemaker as the FAA

inspector.

Still, this is probably the only model that

has a hope of working. A highly competent group of people can be built up over

time, using machines to augment their abilities. An agency offers a rapid

response to some new emergency, while legislation does not. The agency would

need to hand out severe penalties, including shuttering a rogue manufacturer,

while keeping well away from the military.

We Have Captured And Made Live The Regulations. What’s Next?

The machine is given a task – it checks that

the task doesn’t violate the regulations, and if it doesn’t, and the task is not

life-critical, the machine performs it.

If the task is life-critical, more information is required.

If it is a virtual run, with no real-world effects, what happens? A trace is kept

of which regulations are flouted.
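
The flow described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not a real system: the names `Task`, `Regulation`, `Decision`, `Outcome` and `gate` are all hypothetical, a regulation is reduced to a simple yes/no check rather than text the machine reads and understands, and refusing a real-world task that breaks a regulation is an assumption, since the post does not say what happens in that case.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Outcome(Enum):
    PERFORMED = "performed"
    REFUSED = "refused"  # assumption: the post does not say what happens on a violation
    NEEDS_MORE_INFORMATION = "needs more information"
    TRACED = "traced"  # virtual run: nothing happens in the real world


@dataclass(frozen=True)
class Task:
    description: str
    life_critical: bool = False
    virtual: bool = False


@dataclass(frozen=True)
class Regulation:
    name: str
    # Hypothetical stand-in for the machine reading and applying the regulation.
    is_violated_by: Callable[[Task], bool]


@dataclass
class Decision:
    outcome: Outcome
    flouted: list[str] = field(default_factory=list)


def gate(task: Task, regulations: list[Regulation]) -> Decision:
    """Decide what to do with a task, following the steps in the text."""
    flouted = [r.name for r in regulations if r.is_violated_by(task)]
    if task.virtual:
        # Virtual run: no real-world effects, just keep a trace of flouted regulations.
        return Decision(Outcome.TRACED, flouted)
    if flouted:
        return Decision(Outcome.REFUSED, flouted)
    if task.life_critical:
        return Decision(Outcome.NEEDS_MORE_INFORMATION)
    return Decision(Outcome.PERFORMED)


# A made-up regulation, purely for illustration.
rules = [Regulation("no night flights", lambda t: "at night" in t.description)]

for example in (
    Task("deliver parcel at noon"),
    Task("deliver parcel at night"),
    Task("airlift patient at noon", life_critical=True),
    Task("deliver parcel at night", virtual=True),
):
    decision = gate(example, rules)
    print(example.description, "->", decision.outcome.value, decision.flouted)
```

The virtual-run check comes first so that a simulated task is never refused outright; it simply reports which regulations it would have flouted.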
