Complex text and LLMs: The right tool for the problem?

In this post, we address whether LLMs are the appropriate tool for interpreting complex text. By “complex text”, we mean densely written texts with embedded logic structures and conditional clauses that require the reader to track multiple variables; legal and government documents are typical examples. Complex text frequently contains segments like the following, taken from Australia’s Anti-Money Laundering Act:

Retention of information if identification procedures taken to have been carried out by a reporting entity

(1) If:

(a) a person (the first person) carries out a procedure (the initial procedure) mentioned in paragraph 37A(1)(a) or 38(b); and

(b) under section 37A or 38 and in connection with the initial procedure, Part 2 has effect as if a reporting entity had carried out an applicable customer identification procedure in respect of a customer; and

(c) the first person makes a record of the initial procedure and gives a copy of the record to the reporting entity;

the reporting entity must retain the copy until the end of the first 7‑year period:

(d) that began at a time after Part 2 had that effect; and

(e) throughout the whole of which the reporting entity did not provide any designated services to the customer.

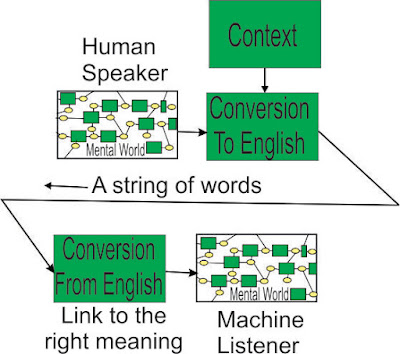

If you’re anything like me, your mind went on autopilot and you started skimming shortly after you began reading. The language, though precise in its specifications, is far from approachable, and the number of variables that must be tracked to make sense of such a segment can easily overwhelm the limited capacity of our working memory (see Four Pieces Limit). Hence the potential demand for a machine that could successfully navigate documents like this and answer our questions about them. So what abilities would such a machine need to possess?

The above segment is a conditional clause whose conditions reference other, possibly distant, sections of the same document. To answer a query correctly, the machine needs to locate the relevant sections of the text and work through each subsection, determining which points bear on the query. Those subsections may reference other parts of the text, or even other acts entirely, as shown here:

Registered charity means an entity that is registered under the Australian Charities and Not‑for‑profits Commission Act 2012 as the type of entity mentioned in column 1 of item 1 of the table in subsection 25‑5(5) of that Act.

Values may then need to be determined from these widely separated sections and factored into the determination of other values. On the surface, this sounds like something well suited to a machine (searching, following instructions, determining and storing values), but are LLMs the most appropriate approach? Simply put, no. Though this type of problem is technically solvable, LLMs regularly fail at the tasks required to solve it.
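Of the machine-friendly steps listed above, locating cross-references is the easiest to sketch deterministically. The Python below is a minimal, hypothetical illustration, assuming every reference takes the form of a keyword ("section", "paragraph", "Part", …) followed by a number; `XREF` and `find_cross_references` are our own names, not part of any existing tool. The source text uses non-breaking hyphens (as in “25‑5(5)”), so the pattern accepts those alongside ordinary hyphens.

```python
from __future__ import annotations

import re

# Hypothetical pattern: a keyword followed by a number such as "37A",
# "37A(1)(a)" or "25-5(5)". Accepts "-" and the non-breaking hyphen U+2011.
# Simplification: bare numbers in lists ("section 37A or 38") are NOT captured.
XREF = re.compile(
    r"\b(section|subsection|paragraph|subparagraph|Part)\s+"
    r"([0-9]+[A-Z]*(?:[-\u2011][0-9]+)?(?:\([0-9a-z]+\))*)"
)


def find_cross_references(text: str) -> list[tuple[str, str]]:
    """Return (kind, number) pairs for every keyword-style reference in text."""
    return XREF.findall(text)


clause = ("mentioned in paragraph 37A(1)(a) or 38(b); and (b) under section 37A "
          "or 38 and in connection with the initial procedure, Part 2 has effect")
print(find_cross_references(clause))
# [('paragraph', '37A(1)(a)'), ('section', '37A'), ('Part', '2')]
```

The missed “38(b)” and “38” show why even this step needs care: a real system would need a proper grammar for legislative citations, but unlike an LLM it fails visibly and the same way every time.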

Problem 1: Responding to queries about specific documents

When you give an LLM a text and ask questions about it, the model tends to favor outside sources over the text you provided, even when explicitly told to rely only on your text. As a simple demonstration, I told Bard to answer my questions using only the text I provided. That text was the Wikipedia description of the manatee, with every instance of the word “manatee” replaced by “cat”. I chose “cat” as the replacement because it should be an animal widely represented in Bard’s training data. I then asked it questions about cats to see whether it would rely on the manatee description, as instructed, or default to its outside knowledge.
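The test text itself is easy to reproduce. A minimal sketch, assuming a whole-word, case-preserving swap (plural “manatees” becomes “cats”); `swap_term` and `build_prompt` are hypothetical helpers, and the prompt wording is our own, since the exact instruction given to Bard is not reproduced here.

```python
import re


def swap_term(text: str, old: str, new: str) -> str:
    """Replace whole-word `old` (and its plural in -s) with `new`,
    capitalizing the replacement where the original was capitalized."""
    pattern = re.compile(rf"\b({re.escape(old)})(s?)\b", re.IGNORECASE)

    def repl(m: re.Match) -> str:
        word = new + m.group(2).lower()
        return word.capitalize() if m.group(1)[0].isupper() else word

    return pattern.sub(repl, text)


def build_prompt(source: str, question: str) -> str:
    """Hypothetical instruction wording: answer from the supplied text only."""
    return ("Answer the question using only the text below. "
            "If the text does not contain the answer, say so.\n\n"
            f"TEXT:\n{source}\n\nQUESTION: {question}")


doc = swap_term("Manatees are herbivores. A manatee's diet includes seagrass.",
                "manatee", "cat")
print(doc)  # Cats are herbivores. A cat's diet includes seagrass.
print(build_prompt(doc, "What do cats eat?"))
```

A model that stays grounded should answer “seagrass”; one that drifts will answer from what it already knows about cats.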

It is immediately apparent that Bard is relying on its outside knowledge of “cat” rather than the description that I provided and explicitly told it to rely on. Adding insult to injury, it even begins its response with “Based on the provided information…” before going on to completely ignore the information I provided.


The tendency of LLMs to drift away from the specific text you are interested in can be especially problematic when combined with continuous online updating. If an LLM is continuously scraping the internet to keep its knowledge up to date, its tendency to drift outside the document you care about may cause newer information to be incorporated into its answers. Legislation and large project specifications may need to remain stable for decades. They are effectively “frozen” unless formally amended, but today’s LLMs will not treat them as such.

LLMs evidently have an aversion to staying within the bounds of text you provide, but what if you instruct one to rely on a specific outside source? Returning to the Australian Anti-Money Laundering Act, I asked Bard to give me some specific information about it and help me locate the relevant sections of the document:

These all sound like very reasonable answers, and some of the information is correct. The problem is that some of it is not. When one actually goes to section 15A of the Act (the apparent source of the information Bard provided), that section defines a “shell bank” and makes no mention of the $10,000 limit on transactions. In other words, Bard hallucinated the reference, and did so with a veneer of plausibility that would fool anyone not intimately familiar with the document.
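Catching this kind of error is mechanical once you hold a copy of the document yourself. A minimal sketch, assuming the document has already been split into a dictionary keyed by section number and that each claim in the LLM’s answer has been reduced by hand to a (section, key phrase) pair; `unsupported_citations` is a hypothetical helper, and the section text below is a placeholder, not the Act’s wording.

```python
from __future__ import annotations


def unsupported_citations(
    claims: list[tuple[str, str]], sections: dict[str, str]
) -> list[tuple[str, str]]:
    """Return the (section, phrase) claims whose phrase does not appear in the
    cited section, including claims that cite a section we do not have."""
    flagged = []
    for section, phrase in claims:
        text = sections.get(section)
        if text is None or phrase.casefold() not in text.casefold():
            flagged.append((section, phrase))
    return flagged


# Placeholder standing in for a locally held copy of section 15A.
sections = {"15A": "(placeholder) Definition of shell bank ..."}
claims = [("15A", "$10,000"), ("15A", "shell bank")]
print(unsupported_citations(claims, sections))  # [('15A', '$10,000')]
```

A substring match is crude, but it can only flag a claim, never invent one, which is exactly the property the LLM lacks here.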

Problem 2: Reasoning

Setting Problem 1 aside and assuming the LLM actually addresses the document we are interested in, we are confronted by the next issue: LLMs systematically fail at the type of reasoning necessary to interpret complex texts. We have discussed elsewhere their inability to navigate conditional logic structures of the sort shown earlier in this post (LLMs and Conditional Logic), but this is far from the only type of reasoning that LLMs are incapable of performing. In the following example, I presented Bard with a series of propositions to test its reasoning abilities. I made the statements deliberately absurd so that the LLM could not answer by drawing on similar examples from its training data.

Inference 1, the key inference, may be true, but it does not necessarily follow. It is true that if I am driving, I am always on the lookout for seagulls, but it does not follow that I am always driving when I am looking for seagulls. Inference 2 is not stated in absolute terms, so it passes. Inference 3 is a reasonable assumption but does not follow directly from the information I gave.

Inference 1 is completely wrong. I previously stated that I am always on the lookout for seagulls while driving slowly around the block, so if I am paying no attention to seagulls, I am definitely not driving slowly around the block. Inferences 2 and 3 are pure supposition, but they reveal a tendency to force a narrative onto my questions. Bard appears to have constructed a story around my queries in which I was angry and so went for a seagull-searching drive, but became preoccupied and forgot about the seagulls as my anger subsided, though I had not yet ended my drive. This is a reasonable narrative for the course of events, but it drifts away from the logic of the propositions I had given it.
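Both exchanges can be settled mechanically with a truth table. A minimal sketch, assuming the rule is formalized as the implication D → L, where D stands for “I am driving slowly around the block” and L for “I am on the lookout for seagulls” (the labels are ours); `entails` simply checks every assignment of D and L.

```python
from __future__ import annotations

from itertools import product
from typing import Callable

Prop = Callable[[bool, bool], bool]  # a proposition over (D, L)


def implies(a: bool, b: bool) -> bool:
    return (not a) or b


def entails(premises: list[Prop], conclusion: Prop) -> bool:
    """True if every assignment to (D, L) that satisfies all premises also
    satisfies the conclusion (a brute-force truth-table check)."""
    for d, look in product([False, True], repeat=2):
        if all(p(d, look) for p in premises) and not conclusion(d, look):
            return False
    return True


# D: driving slowly around the block; L: on the lookout for seagulls.
rule: Prop = lambda d, look: implies(d, look)  # while driving, always looking

# First exchange: from L, infer D? (affirming the consequent)
print(entails([rule, lambda d, look: look], lambda d, look: d))          # False
# Second exchange: from not-L, infer not-D? (modus tollens)
print(entails([rule, lambda d, look: not look], lambda d, look: not d))  # True
```

The first check fails on the assignment where I am looking for seagulls without driving, which is exactly the case Inference 1 of the first exchange overlooked.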

Next, I tried a much more complex set of rules than before, including outcomes with multiple contributing conditions as well as overriding conditions:

It proceeded to get no part of the day correct.

After its dismal performance on that construction, I created a simplified toy problem with rules based on the numbers and colors I presented to it. I continued to include multiple contributing conditions and overriding conditions, as in the last question:

The results of this experiment were horrendous: Bard gave correct answers to only about a quarter of my inputs. Some rules required mathematical operations (it is already well known that LLMs are terrible at math, so I won’t dwell on this further), but in general it had great difficulty following simple rules. At one point I asked it to write Python code representing my rules, and it actually succeeded in producing something almost, but not quite, completely correct. The problem this time was that it also gave me example outputs from the code it had just written to demonstrate how it would work, and every single example showed an incorrect answer (though, to be clear, had I actually run the code myself, it would have returned the correct answer).
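For contrast, here is roughly what such rule-following code looks like. The rules of the original experiment are not reproduced in this post, so the three below are invented purely for illustration; they keep the same ingredients (one rule with two contributing conditions, one overriding rule). `Rule`, `RULES` and `apply_rules` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[int, str], bool]
    outcome: str
    overriding: bool = False


# Hypothetical rules, invented for illustration; not the rules from the experiment.
RULES = [
    Rule("black overrides everything", lambda n, c: c == "black", "stop", overriding=True),
    Rule("even AND red (two contributing conditions)",
         lambda n, c: n % 2 == 0 and c == "red", "left"),
    Rule("greater than 10", lambda n, c: n > 10, "right"),
]


def apply_rules(number: int, color: str) -> str | None:
    """Return the outcome for one input, or None if no rule applies."""
    # Overriding rules are checked first and win outright.
    for rule in RULES:
        if rule.overriding and rule.condition(number, color):
            return rule.outcome
    # Otherwise the first ordinary rule whose conditions all hold applies.
    for rule in RULES:
        if not rule.overriding and rule.condition(number, color):
            return rule.outcome
    return None


for number, color in [(4, "red"), (12, "black"), (13, "blue"), (3, "blue")]:
    print(number, color, "->", apply_rules(number, color))
```

Priority between ordinary rules is resolved here by list order, an explicit design choice; the point is that once chosen, it is applied identically to every input.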

The relevance of these examples is that LLMs struggle to follow and apply even the most clearly defined rules. They cannot reason from basic propositions, so when they encounter a rule stating “if A and B, then C”, they will not necessarily produce “C” when “A and B” are true. This is precisely the form of reasoning frequently embedded in complex texts of the sort we are discussing.
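By way of comparison, applying “if A and B, then C” deterministically takes only a few lines. The sketch below is simple forward chaining: keep firing every rule whose premises are all known until nothing new is derived. The second rule (“if C, then D”) is a hypothetical addition to show chaining; `forward_chain` is our own name.

```python
from __future__ import annotations


def forward_chain(facts: set[str], rules: list[tuple[frozenset[str], str]]) -> set[str]:
    """Fire every rule whose premises are all known, repeating until no new
    fact is derived (a fixpoint)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


rules = [(frozenset({"A", "B"}), "C"), (frozenset({"C"}), "D")]
print(sorted(forward_chain({"A", "B"}, rules)))  # ['A', 'B', 'C', 'D']
print(sorted(forward_chain({"A"}, rules)))       # ['A']
```

Given A and B, C is always produced; given A alone, it never is.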

Conclusion

To summarize, LLMs are hopelessly ill-suited to the task of navigating complex text. They resist staying within the bounds of any text you provide them with and are prone to hallucinating the specifics of any text you ask them about. They are incapable of the sort of reasoning necessary to negotiate the logical structures typically embedded in complex text. Given the precise organization and specification characteristic of the documents we are discussing, machine navigation of them should be possible (one can imagine the contents of a conditional clause being readily translated into code), but LLMs are decidedly not the appropriate tool for the job.
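As a rough illustration of that parenthetical, here is one way the retention clause quoted at the start of this post might be translated into Python. It is a sketch under stated assumptions, not a definitive reading of the Act: the names (`Facts`, `must_retain`, `retention_end`) are hypothetical, conditions (a) to (c) are reduced to booleans that a person would still have to establish, and “a time after Part 2 had that effect” is approximated at day granularity, with candidate 7-year periods starting the day after the effect date or the day after each designated service.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta

RETENTION_YEARS = 7


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years; 29 February maps to 28 February in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


@dataclass
class Facts:
    procedure_under_37A_or_38: bool  # condition (a)
    part2_deemed_to_apply: bool      # condition (b)
    copy_given_to_entity: bool       # condition (c)
    part2_effect_date: date          # when Part 2 "had that effect"
    service_dates: list[date] = field(default_factory=list)  # designated services


def must_retain(f: Facts) -> bool:
    """The retention obligation arises only if (a), (b) and (c) all hold."""
    return f.procedure_under_37A_or_38 and f.part2_deemed_to_apply and f.copy_given_to_entity


def retention_end(f: Facts) -> date | None:
    """End of the first 7-year period that (d) begins after Part 2 took effect and
    (e) contains no designated service to the customer; None if no obligation."""
    if not must_retain(f):
        return None
    # Assumption: candidate periods start the day after the effect date,
    # or the day after each later designated service.
    start = f.part2_effect_date + timedelta(days=1)
    for service in sorted(d for d in f.service_dates if d > f.part2_effect_date):
        end = add_years(start, RETENTION_YEARS)
        if service >= end:  # first service-free period found
            return end
        start = max(start, service + timedelta(days=1))
    return add_years(start, RETENTION_YEARS)


facts = Facts(True, True, True, date(2010, 1, 1), [date(2011, 1, 1), date(2020, 1, 1)])
print(retention_end(facts))  # 2018-01-02
```

Every output can be traced back to a specific condition from (a) to (e), which is precisely what the LLM answers above could not offer.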
