Expanding the Vocabulary of a Semantic AI System

We now have a vocabulary of 45,000 words and 10,000 wordgroups, but it is still not uncommon to encounter ten or so new words when reading a piece of text (a column from the NYT, or a report of a new technical breakthrough; a new technical area is handled differently). A process running in the background detects the unknown word and searches several dictionaries for it. Once the word is found, a file is created to house the definitions, the appropriate structure is built into the network, and reading of the text continues. The file is later checked manually for accuracy and completeness. That is not enough: in a period of rapid change, words can acquire new definitions, or have their existing definitions radically changed (tariffs in 2025, for example). Currently, change of meaning is handled manually, as waiting for the dictionary-makers to catch up would be far too slow. For legislation, which can have a long life, it may be necessary to carry the old definitions that were in use when the legislation was created.
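The steps described above (detect an unknown word, search several dictionaries, store the definitions, queue them for manual checking, and keep older definitions available by date) can be sketched roughly as follows. This is only an illustration under stated assumptions, not the system's actual code: the names `Definition`, `Lexicon`, `learn`, `redefine`, `meaning_on` and `read` are all hypothetical, a dictionary source is assumed to be any function that maps a word to a list of definition texts (or nothing), definitions are held in memory rather than in a file, and the step of building structure into the network is left out.

```python
import re
from dataclasses import dataclass, field
from datetime import date
from typing import Callable, Optional

# Hypothetical type: a dictionary source maps a word to definition texts, or None.
Source = Callable[[str], Optional[list]]

@dataclass
class Definition:
    text: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the definition is still current
    checked: bool = False            # set to True once a person has reviewed it

@dataclass
class Lexicon:
    entries: dict = field(default_factory=dict)       # word -> list of Definition
    review_queue: list = field(default_factory=list)  # words awaiting manual check

    def knows(self, word: str) -> bool:
        return word.lower() in self.entries

    def learn(self, word: str, sources: list, today: date) -> bool:
        """Search each source in turn and store the first set of definitions found."""
        key = word.lower()
        for lookup in sources:
            texts = lookup(key)
            if texts:
                self.entries[key] = [Definition(t, valid_from=today) for t in texts]
                self.review_queue.append(key)  # checked by hand later
                return True
        return False

    def redefine(self, word: str, new_text: str, effective: date) -> None:
        """Manual change of meaning: close current definitions, add the new one."""
        key = word.lower()
        for d in self.entries.get(key, []):
            if d.valid_to is None:
                d.valid_to = effective
        self.entries.setdefault(key, []).append(
            Definition(new_text, valid_from=effective, checked=True)
        )

    def meaning_on(self, word: str, when: date) -> list:
        """Definitions in force on a given date, e.g. when a law was enacted."""
        return [
            d.text
            for d in self.entries.get(word.lower(), [])
            if d.valid_from <= when and (d.valid_to is None or when < d.valid_to)
        ]

def read(text: str, lexicon: Lexicon, sources: list, today: date) -> list:
    """Read text, learning unknown words on the way; return words no source had."""
    not_found = []
    for word in re.findall(r"[A-Za-z']+", text):
        if not lexicon.knows(word) and not lexicon.learn(word, sources, today):
            not_found.append(word)
    return not_found

# Usage with a toy source; the definition texts are placeholders, not real entries.
toy_dictionary = {"tariff": ["older sense (placeholder text)"]}
lexicon = Lexicon()
unresolved = read("tariff", lexicon, [toy_dictionary.get], date(2010, 1, 1))
lexicon.redefine("tariff", "newer sense (placeholder text)", date(2025, 1, 1))
old_sense = lexicon.meaning_on("tariff", date(2015, 6, 1))
new_sense = lexicon.meaning_on("tariff", date(2025, 6, 1))
```

Keeping a validity period on each definition, rather than overwriting it, is what lets a long-lived piece of legislation be read with the meanings that were in use when it was written.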

This seems a lot of work. Is it needed?

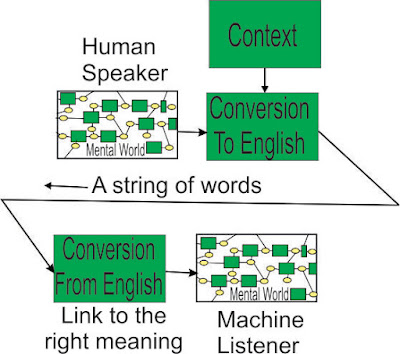

A lot of effort is needed to use the meanings of words. Most of that effort goes unnoticed because humans have no choice but to do it unconsciously, given the severe limit on their input (the Four Pieces Limit). If the effort is being made by a machine that has no such limit, it seems reasonable to use the right meanings.

Relevance: A way to stop the skulduggery behind Robodebt (two suicides, hundreds of thousands traumatised), the British Post Office's Horizon (four suicides, thousands deeply scarred), and Boeing 737 MAX MCAS (346 dead, a reputation trashed).
