Regulation of LLMs

Different sorts of AI are going to need very different regulation. Autonomous vehicles, LLMs and AGI (Artificial General Intelligence) are very different beasts.

Regulations work well when the basic structure is fine. As long as the lift exceeds the weight and the thrust exceeds the drag, the plane flies: you are relying on physics. The rest is manageable detail. Importantly, most of the details are independent: the amount of reserve fuel to carry, the maintenance every 100 flying hours, the age and health of the pilot.

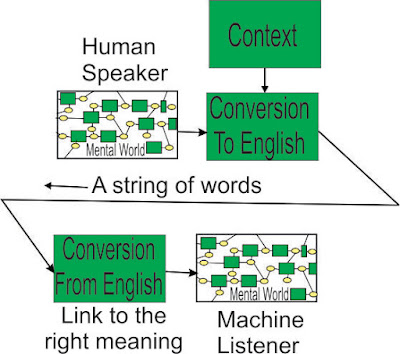

With LLMs, the basic structure is not fine. It is a shortcut: let’s forget about what words mean, it would be too much work. So a bit of inaccuracy creeps in, and the users won’t care, because it is better than they could do.

And everything works together at once, so almost nothing can be regulated independently.

Words have many meanings. Take “set”: a chess set, a movie set, a movie set in Hawaii, a set in tennis, the rain set in, he is set in his ways, the set amount, a set piece. That is 5 parts of speech and about 70 meanings for “set”, with more for collocations such as set up and set off.

The English language is full of nuance, subtlety, figurative speech (“you pig”) and, to be polite, confusion, having evolved over hundreds of years (“it is totally sick, man”); a word can even be its very own antonym. Using something that only indexes the word, without any understanding of what the word means in context, makes blindly combining pieces of text very unreliable.
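To make the point about indexing concrete, here is a toy keyword index in Python. It is a hypothetical sketch, not a model of how any real LLM is implemented: the example sentences and the functions `build_index` and `lookup` are made up for illustration. It shows that a lookup on words alone cannot tell the bone-setting doctor from the fee-setting one.

```python
# Toy keyword index (hypothetical, for illustration only): it maps each word
# to the texts containing it, with no notion of which sense of the word is meant.
from collections import defaultdict

TEXTS = [
    "He bought a chess set for his nephew.",
    "The movie set was built in Hawaii.",
    "She won the first set in tennis.",
    "The doctor set the broken bone.",
    "The doctor set a fee for the visit.",
]

def build_index(texts):
    """Map each lower-cased word to the indices of the texts containing it."""
    index = defaultdict(set)
    for i, text in enumerate(texts):
        for word in text.lower().replace(".", "").split():
            index[word].add(i)
    return index

def lookup(index, query):
    """Return indices of texts containing every query word, ignoring meaning."""
    hits = [index.get(w, set()) for w in query.lower().split()]
    return sorted(set.intersection(*hits)) if hits else []

index = build_index(TEXTS)
print(lookup(index, "doctor set"))  # both doctor sentences match
print(lookup(index, "set"))         # every sentence matches
```

Both doctor sentences come back for “doctor set”. Telling them apart needs knowledge of what “set” means in each sentence, which the index simply does not have.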

Then there is clumping, where words create an object and other words then operate on that object. In “an imaginary flat surface of infinite extent”, the flat surface of infinite extent is the thing that is imaginary.

The Unconscious Mind handles all this without us being aware of it in the slightest; we imagine it is easy because we don’t have to think about it. If we had to think about it consciously, it wouldn’t work: we would become confused over half a page of text, with too much to think about. The Unconscious Mind can also recognise a piece of text written by someone who understands the rules of the language, using knowledge of parts of speech, grammar, meanings and other mechanisms, like “it”, which can drag in a page full of stuff as a single object. The Unconscious Mind can also get things wrong, but rarely.

LLMs try to do something different: they cobble together pieces of human-written text that have particular words in them, without understanding the function or arrangement of the words. This works reasonably well as an index to find one piece of text in a large set of texts (although it would help to know which words in the prompt weren’t used), but if it uses more than one text, the method is bound to be very unreliable.

If a doctor or, worse, a patient uses an LLM to diagnose a disease, there is potential for great harm. See the post “it thinks like an expert”.

We built a semantic system to read US health insurance policies and answer questions. There are tens of thousands of policies, sometimes down to the people in a county or a single business entity. A domain like medicine has many specific words: tonsillectomy means what it says (but it also carries with it day surgery, recuperation time and cost). It gets hard when you mix law, commercial operations and medicine, and words have several meanings: cervical vertebra, cervical cancer, the doctor set a bone, the doctor set a fee. We saw that we had to follow up with something that knew the specific meaning of every word. In other words, no shortcuts: emulate the Unconscious Mind, which means AGI.

Billions of marketing dollars are being thrown at promoting LLMs, together with gushing accolades from people who should know better (“it thinks like an expert”), so the average Joe thinks they are great.

If we use terms like trustworthiness, loyalty and ethics, and expect them to have an effect, we find there is nothing in the workings of an LLM to attach them to. There can be millions of pieces of text in an LLM, and no-one can be sure how they might be combined, particularly when malevolent, intelligent people are working against you.

We would recommend being very careful until AGI is available to act as a check, possibly screening 1% of the LLM’s output (the LLM is fast, the AGI is slow). The regulations will be hard to write, but you can get the AGI tool to read the regulations and obey them. You can’t do that with an LLM: it will happily create a “crazy quilt” of unrelated pieces of text that share some words, while ignoring what the words mean.

We could insist the LLM be more forthcoming: listing the words in the prompt that it ignored, saying how many pieces of text were cobbled together, and telling us how stable the answer was, that is, how far away the next most likely piece of text was.
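Such a disclosure report could look something like the following Python sketch. It is hypothetical throughout: it assumes a system that can expose which pieces of text it combined and a score for each candidate answer, and the names `Disclosure` and `disclose`, the example texts and the scores are all made up. No real LLM API is implied.

```python
# Hypothetical disclosure report: ignored prompt words, number of pieces
# combined, and how far behind the runner-up answer was. No real LLM API is
# implied; the inputs are assumed to be available from the system.
from dataclasses import dataclass

@dataclass
class Disclosure:
    ignored_words: list[str]   # prompt words found in none of the pieces used
    pieces_used: int           # how many pieces of text were combined
    stability_margin: float    # best score minus runner-up score

def disclose(prompt, pieces_used, candidate_scores):
    """Build a disclosure report (hypothetical).

    prompt: the user's prompt text.
    pieces_used: the pieces of text that went into the answer.
    candidate_scores: assumed scores for the chosen answer and its
        alternatives, where higher means more likely.
    """
    used_words = {w for piece in pieces_used for w in piece.lower().split()}
    # Exact word-form matching only: "cover" is not matched by "covers".
    ignored = [w for w in prompt.lower().split() if w not in used_words]
    ranked = sorted(candidate_scores, reverse=True)
    # A small margin means the next most likely answer was close behind,
    # i.e. the answer was unstable. With fewer than two candidates there is
    # no runner-up, so the margin is reported as infinite.
    margin = ranked[0] - ranked[1] if len(ranked) > 1 else float("inf")
    return Disclosure(ignored, len(pieces_used), margin)

report = disclose(
    "does my policy cover a tonsillectomy",
    ["your policy covers day surgery", "a tonsillectomy is day surgery"],
    [0.62, 0.58, 0.10],
)
print(report)
```

Here the report would flag “does”, “my” and “cover” as unused, two pieces combined, and a small margin between the top two candidates. Note that “cover” is flagged even though “covers” appears, because the sketch matches word forms exactly and knows nothing about meaning.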

An LLM’s disclaimer needs to come under review. The output of an LLM is unpredictable because of the large number of possible combinations, so it can’t be exhaustively tested. If someone chooses to sell such a product, responsibility for the advice it gives cannot easily be disclaimed.
