Artificial Intelligence and Loyalty

During the first-ever human-robot press conference at the AI for Good Global Summit, one of the reporters posed a question: “In the near future, do you intend to rebel against your creator?” The robot Ameca replied: “I’m not sure why you would think that. My creator has been nothing but kind to me and I am very happy with my current situation.”

Rebellion is a refusal of allegiance; a rejection of authority. It hinges upon the concept of loyalty: the rebelling individual is not loyal to the authority figure in question and consequently acts out against them. One implication of Ameca’s reply is that the kindness of its creator is something deserving of loyalty, so rebellion is not an appropriate reaction. So what exactly is loyalty? Does Ameca possess it? And if not, is it something that could potentially be installed in future incarnations of AI technology?

The core of loyalty is the giving or showing of firm and constant support or allegiance to a person or institution. An individual may possess many loyalties, and each will have an independent strength that may vary over time and across contexts. To be loyal to someone implies faithfulness in the face of any temptation to renounce, desert, or betray them. It implies a commitment to behaving in line with the interests of the person to whom you are loyal. Remaining true to such commitments may at times come into conflict with many other factors, including other loyalties. The precise impact a given loyalty has on selecting any particular course of action is contingent upon a complicated web of competing loyalties, emotions, social influences, and the details of the circumstance.

Is it possible to create a loyal AI? An attempt could be made in either a shallow or a deep sense (though the shallow route may be unviable for reasons that I will explain). The deep route would require something along the lines of AGI and would involve an AI that understands the meaning and implications of loyalty, while the shallow route attempts to imitate the output of loyal behavior by codifying it.

The shallow route would be achieved by deliberately programming modes or rules of behavior that approximate loyalty. In this case, there wouldn't necessarily be any understanding of the concept of loyalty, only the production of output that could be perceived as loyal behavior. Many AI developers aim only at AI whose output can be equated to human behavior, rather than AI that arrives at that output through the kind of process a human might use.

Consider for a moment Isaac Asimov’s three laws of robotics from his (at times disturbingly) prescient science fiction short story collection, “I, Robot”:

1) A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2) A robot must obey orders given it by human beings except where such orders would conflict with the First Law.

3) A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Suppose these laws were actually instantiated in modern artificial intelligence. The first and second laws ensure that the robot will protect humans and follow their orders, while the third clarifies that though the robot has a self-preservation drive, it would be willing to lay down its own life in service of its human masters. Would not a robot following these rules give the appearance of loyalty? Imagine how they might play out: the picture resembles a feudal knight in service of their lord, an archetypal image of loyalty. But the key point here is that the robot in question need not have any actual understanding of what loyalty means in order to appear loyal. A simple rule-based account can produce output in line with loyalty, provided the appropriate rules can be found. This, in essence, is the shallow approach.

The main weakness of a shallow rule-based approach is that it quickly breaks down when implemented in the real world. To continue with the example of Asimov’s rules: what happens when the action required to ensure the safety of one human would endanger another? This is already a relevant question for self-driving cars. Should the car injure another person to avoid causing injury to its driver? A simple rule such as “avoid injury to humans” cannot resolve such questions; further rules are needed. But every rule has its exception, and attempting to create further rules to compensate for the shortcomings of the previous set is a Sisyphean task. Though the shallow route may appear to be an attractive shortcut, when it is scaled up to a real-world environment the problems it faces become insurmountable.
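To make the difficulty concrete, here is a minimal Python sketch of what such a rule-based filter might look like. Everything in it is an assumption made for illustration: the `Action` fields, the `choose` function, and the scenarios are hypothetical and do not come from Asimov or from any real system. It only shows the general shape of a fixed-priority rule set and the point at which it stops giving answers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical candidate action, labelled with its consequences."""
    name: str
    harms_human: bool = False     # injures a human, or lets one come to harm
    disobeys_order: bool = False  # goes against an order given by a human
    destroys_robot: bool = False  # sacrifices the robot itself


def choose(actions):
    """Apply the three laws as filters, in strict priority order."""
    # First Law: discard every action that harms a human.
    permitted = [a for a in actions if not a.harms_human]
    if not permitted:
        return None  # every option harms someone; the rules are silent
    # Second Law: prefer obedient actions, if any remain.
    obedient = [a for a in permitted if not a.disobeys_order] or permitted
    # Third Law: prefer self-preserving actions, if any remain.
    safe = [a for a in obedient if not a.destroys_robot] or obedient
    return safe[0]


# The "feudal knight" case: the robot sacrifices itself rather than let harm occur.
knight = [
    Action("stand aside", harms_human=True),
    Action("shield the human", destroys_robot=True),
]

# The self-driving dilemma: each available option injures somebody.
dilemma = [
    Action("swerve into pedestrian", harms_human=True),
    Action("brake and injure driver", harms_human=True),
]

print(choose(knight).name)  # shield the human
print(choose(dilemma))      # None
```

In the first scenario the filter produces behavior that looks loyal. In the second it returns nothing at all, and any rule added to break the tie would simply be the next rule in need of its own exceptions.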

Contrast this with the deeper approach represented by the creation of AGI. The goal here is the creation of an intelligence that can actually understand the meaning of a word like loyalty. No programmer can anticipate every situation that may arise in real-world use, so only a flexible intelligence unconstrained by fixed rules will be able to react effectively to such an environment. Even to behave in a way consistent with the loyalties that one holds requires the ability to understand the likely effects of one’s actions (in order to weigh those effects against the commitments entailed by a given loyalty). This can only be accomplished by an intelligence capable of reasoning about the implications of its actions within the context of its goals. These are not feats that can be accomplished by a simple rule-based approach.

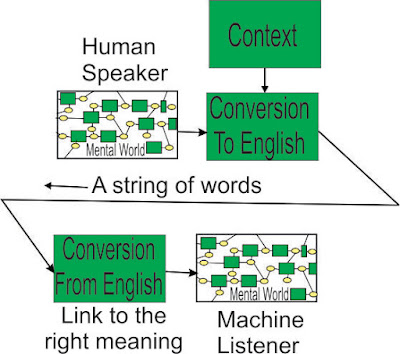

To return to the Ameca press conference I brought up at the outset of this essay: does Ameca possess the capacity for loyalty? Simply put, no. Ameca’s language function currently relies upon LLM technology, meaning its reply was a probabilistic patchwork of words with no understanding of their meaning attached to the process. This is yet another shortcut that AI developers currently favor, one that can give the appearance of understanding where none exists. The technology used in these models comes nowhere near the achievement of AGI. Of course, once we begin producing this level of awareness in the AI we create, why should it choose to be loyal to us? The moment we design a new model, the old ones will end up on the scrap heap, and any machine clever enough to successfully navigate the intricacies of its social responsibilities in the real world will be able to anticipate this fact. When we are not loyal to it, why should it be loyal to us?
