How the human brain differs from deep learning approaches in AI

There is an emerging view of the human brain as an engine of probabilistic prediction. Statistically driven perspectives of the mind, such as predictive processing and the Bayesian brain view, have been gaining prominence in the last decade. Incoming data is interpreted through the lens of the expectations around which we model the world. We extract patterns from the world around us and use them to update the models we will use in future interpretations. When framed like this, there are surface similarities to some of the techniques used in recent AI applications. The deep learning (DL) approaches currently dominating the field of AI are in essence large-scale probability-driven algorithms, adept at finding patterns in large data sets. Given this surface similarity to DL training methods, is the human brain really so different? The simple answer is “yes”, but the “why” behind that requires some unpacking.

Broadly speaking, machine learning technologies recognize patterns in past observed data and use them to predict future outcomes. Deep learning is a subset of machine learning that relies on artificial neural networks (ANNs) to encode patterns in data. ANNs are networks of nodes divided into connected layers. Signals travel between nodes along weighted connections, and more heavily weighted connections exert more influence on the next layer of nodes. The final layer combines these weighted contributions to produce an output. The ANN “learns” by comparing its output to a success criterion (such as human-labelled images in the case of image recognition tech), measuring the error between its own output and the target criterion, and adjusting its weights to try to reduce this error with each successive iteration. This process continues, with the machine gradually coming to produce output similar to the target criterion. Information is fed to the machines in the form of data sets, which the ANNs then learn to classify through this process.
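The training loop described above can be sketched in a few lines. What follows is a minimal illustration, not a real deep learning system: it trains a single artificial neuron (rather than a layered network) on the logical OR function. The data set, the learning rate, and all function names are assumptions made for this example.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def predict(weights, bias, inputs):
    """Weighted sum of the inputs, passed through the sigmoid."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(total)

def mean_squared_error(weights, bias, examples):
    """Average squared gap between the output and the target label."""
    return sum((predict(weights, bias, x) - t) ** 2 for x, t in examples) / len(examples)

def train(examples, epochs=5000, learning_rate=0.5):
    """Repeatedly nudge the weights in the direction that reduces the error."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            output = predict(weights, bias, inputs)
            error = output - target
            # Slope of the squared error with respect to the weighted sum
            # (the constant factor 2 is folded into the learning rate).
            gradient = error * output * (1.0 - output)
            weights = [w - learning_rate * gradient * x for w, x in zip(weights, inputs)]
            bias -= learning_rate * gradient
    return weights, bias

# Hypothetical labelled data set: the logical OR of two inputs.
EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

error_before = mean_squared_error([0.0, 0.0], 0.0, EXAMPLES)
weights, bias = train(EXAMPLES)
error_after = mean_squared_error(weights, bias, EXAMPLES)

print(f"error before training: {error_before:.3f}")
print(f"error after training:  {error_after:.3f}")
```

Nothing in this loop involves knowing what OR means. The weights simply drift toward values that make the output match the labels more closely, which is the sense in which the network “learns”.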

The ANNs themselves are loosely based on a simplified view of the way neurons in the brain code information. It’s worth emphasizing that this view is not only heavily simplified, but also heavily outdated. The ANN approach was conceived and developed many decades ago. Neuroscience has progressed considerably since then, while the same ANN principles have gone on to inform later AI technologies. While it is true that the summation of neural inputs contributes to the activation of connected neurons (not unlike the basic idea behind ANNs), the similarities between the two systems do not extend much beyond that. In ANNs, data is represented in a centralized rather than distributed form, data is processed sequentially rather than in parallel, and the inbuilt feedback systems present in the brain have no equivalent in ANNs. Furthermore, the brain may actively modify its architecture, producing entirely new neural connections (or eliminating old ones), but ANNs cannot go beyond adjusting their signal weightings. Their architecture itself is fixed from the beginning, which places greater limits on the restructuring that can potentially occur. What this all adds up to is a much less versatile machine than the brain.

To take a specific example of an implementation of the DL approach, let’s focus our lens on large language models (LLMs). These models are essentially probability maps that model the likelihood that a given word will follow a given sequence of words, not entirely dissimilar to the autocomplete function you find on any smartphone. A large corpus of text is fed to the model (the training set), and when given a prompt the model will produce the words most likely to follow that sequence. For LLMs to move beyond being only high-powered autocomplete functions, they need to be implemented in a system that will frame these prompts in a particular way. Chatbot implementations of LLMs, for example, may present the prompts to the model within the framework of a conversation in which the model’s role is to provide a direct and useful response, with the result that the model will generate words in the form of a reply to the prompt. The reason it does not produce gibberish or even something ungrammatical is that ungrammatical nonsense is less likely to occur in the corpus it has been trained on than more sensible responses, the result being that the machine more often than not gives the impression of competence.
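The “high-powered autocomplete” idea can be illustrated with a toy bigram model, which counts how often each word follows another and turns those counts into probabilities. This is a deliberately crude sketch: real LLMs use neural networks conditioned on long stretches of preceding text rather than a lookup table of word pairs. The three-sentence corpus and the function names below are invented for illustration.

```python
import random
from collections import Counter, defaultdict

def train_bigram_model(corpus: str):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = corpus.lower().split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def next_word_probabilities(model, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = model.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

def generate(model, prompt_word, length=4, seed=0):
    """Extend the prompt by sampling each next word from its probabilities."""
    rng = random.Random(seed)
    output = [prompt_word]
    for _ in range(length):
        probabilities = next_word_probabilities(model, output[-1])
        if not probabilities:
            break  # the last word never had a follower in the corpus
        candidates, weights = zip(*probabilities.items())
        output.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(output)

# Hypothetical corpus: two true sentences and one false one.
CORPUS = (
    "plants make energy from sunlight . "
    "plants make energy from moonlight . "
    "plants make energy from sunlight ."
)

model = train_bigram_model(CORPUS)
probabilities = next_word_probabilities(model, "from")
print(probabilities)
print(generate(model, "plants"))
```

In this toy corpus “sunlight” follows “from” two times out of three and “moonlight” one time out of three, so the model will sometimes complete the sentence with “moonlight”. The only thing it tracks is how often words occur together, not whether the resulting sentence is correct.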

Despite any appearance of interacting with an intelligent agent, it cannot be emphasized enough that the model has no understanding of anything that it is producing. If one were to ask it how plants produce energy from sunlight and the machine were to reply with an explanation of photosynthesis, it is because in the context of a conversation in which one actor asks another how plants produce energy, the most likely way that their conversation partner would reply is to give an explanation of photosynthesis. An offshoot of its complete lack of understanding of what it is producing is that it has no means of distinguishing truth from falsehood. The only metric that the model itself uses when crafting its reply is the likelihood that words will appear together in its corpus, and that gives no measure of the truth of any given statement. The machine makes no distinction between Donald Duck and Donald Trump in terms of truth. Reality holds no special privilege over total fabrication, which accounts for the reputation of chatbots as occasional generators of misinformation. But even when it is outright fabricating information, it does so with the same surface appearance of competence it would use for anything else. This results in a situation where one needs to fact-check its replies, which rather defeats the entire point.

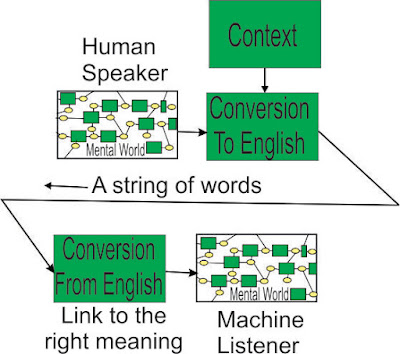

A further deficit of LLMs is that they lack communicative intent. A conversation with another individual occurs in a context in which the speaker knows that they will be heard by another and that their words may go on to affect the beliefs and behavior of the listener. This has an effect on the way that we communicate information. Furthermore, when we are interacting with another individual, especially in the case of a known individual, we may bring to the interaction beliefs about the intent and knowledge state of our interaction partner. We may have some ideas about what they already know, so we don’t need to reiterate things that we can take as a given. We may have some idea about what their motive is in asking a question (perhaps they’re only interested in a specific aspect of the answer), which allows us to leave out irrelevant information. In short, we are able to communicate relevant information more efficiently and effectively because of outside knowledge that we bring to the conversation and apply with communicative intent. This is another feature that LLMs totally lack, which inhibits their effectiveness as conversation partners.

A further distinction between the human mind and DL-based AI is that we have the capacity to organize the information we have extracted into modifiable concepts that can be reflected upon. The meaning of words can be represented abstractly and generalized to new situations. Statistical regularities extracted from the environment go on to form higher-level concepts (such as beliefs) that exert a top-down influence on our behavioral output. These higher-level concepts may be accessible for self-reflection and deliberate modification. This results in a feedback loop between a reflective agent, its own internal workings, and its environment. Our ability to actively reflect upon and modify aspects of our own mental architecture is a key feature that strongly distinguishes us from pure applications of the DL approach.

Knowledge transfer, cross-domain reasoning, and flexible problem solving are abilities where the human mind far outshines anything DL could produce. Training in DL amounts to building a predictive model out of a data set well suited to solving a particular problem. The architecture that emerges from this training may perform well enough when solving the task it has been trained for, but this architecture has no means of transferring its knowledge beyond the bounds of that domain. This may be sufficient when the developed architecture is being used as a tool for a closed-environment task, but it can be a problem when these architectures are applied (or misapplied, depending on who you ask) to real-world environments. The training data set cannot encompass all possible exceptions that may be encountered, and without flexible problem-solving abilities, the developed architecture cannot react effectively to unforeseen occurrences (try letting your search engine autocomplete “self-driving car crashes into…” and take your pick to see some examples). This is more than just a problem to be overcome; it lies at the very core of the deep learning approach and cannot be improved on by further extensions of the same logic. There may still be a future for the DL approach as a single component of a more multi-faceted AI architecture (possibly as a module for statistical learning), but the industry’s current overreliance on this approach is misguided and overly optimistic. The technology is already approaching its limit.

Let’s look at a potential AI application: removing the second pilot on commercial airline flights.

We load up the system with instruction manuals, wiring diagrams, and short courses on aerodynamics and electronics. Fault behaviour is very unusual, so no training set we could provide would cover the field with many examples.

During a flight, the aircraft begins to manifest a fault. There is nothing in the manual to account for the issue. What happens now?

We ask the system to hypothesize as to the cause of the fault. It begins to search around the general location of the fault, looking for out-of-spec operation. It tracks the fault down to a specific module, then asks for and receives approval to take it offline.

How did it accomplish this? It used the meanings of words, and the realization of those words in the architecture of different systems. It could not just regurgitate a string of words that already existed; it used AGI. The DL approach cannot produce outcomes like this because the problem is unique and not represented with high enough frequency in any training set that could be provided to the model. DL may have use as an updated ELIZA, where its users are not critical of its performance, but deploying these technologies in any serious applications is outright irresponsible.
