ChatGPT Critique

OpenAI has recently made ChatGPT available to the general public, and the response has ranged from wild enthusiasm to harsh critique. ChatGPT is able to formulate responses to questions and requests in natural language, occasionally giving the impression of genuine intelligence. But how impressive is this technology really? ChatGPT is a large language model (LLM) trained by extracting word-sequence patterns from a large text data set. Using the probabilities it has derived from analyzing the patterns in its training data, it generates text by repeatedly predicting the next word that best fits the sequence it is putting together.
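To make the idea of next-word prediction concrete, here is a deliberately tiny sketch in Python. It is not how ChatGPT is implemented (a real LLM uses a neural network over far longer contexts); it assumes a toy model that only counts which word follows which in a made-up corpus. The function names and the corpus are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}

def generate(counts, start, max_words=5):
    """Greedily append the most frequent follower of the last word."""
    sequence = [start]
    for _ in range(max_words):
        followers = counts.get(sequence[-1])
        if not followers:
            break  # the last word was never followed by anything
        sequence.append(followers.most_common(1)[0][0])
    return " ".join(sequence)

# Hypothetical toy corpus, standing in for the training data.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram_model(corpus)

print(next_word_probabilities(model, "the"))
print(generate(model, "the", max_words=1))
```

In this toy corpus "the" is followed by "cat" twice and by "mat" and "fish" once each, so the model picks "cat" simply because it is the most frequent continuation. Nothing in the procedure refers to what a cat is.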

One problem with ChatGPT is its lack of attention to ongoing developments in the conversation you are having with it. It is possible to actively direct its attention to a previous statement it has made, but its default approach is to disregard the previous conversation and address every new question independently. This is well illustrated in the following Twitter conversation during which a man painstakingly attempts to get ChatGPT to admit that a sailfish is not a mammal: https://twitter.com/itstimconnors/status/1599544717943123969. The machine repeatedly ignores points that it has itself just made, and even after goading an admission of error out of it, it still defaults to the same false answer when the original prompt is once again given.

Aside from this, its mathematical abilities leave something to be desired, to say the least. It performs fine on basic single-step arithmetical operations, but once you ask it to add more than a few integers it quickly becomes prone to error. This holds even for rather simple questions that the average human can correctly calculate in their head. On complex problems requiring inference or involving text-embedded information, its performance will more often than not fall short of human levels. Arguing about mathematics with ChatGPT can quickly become reminiscent of the 2 + 2 = 5 passage in Orwell's 1984.

This brings us to our next point against ChatGPT: in its current form it has the unfortunate tendency to occasionally fabricate information outright, presenting it in a plausible-seeming form that could readily fool anyone not motivated to fact-check it against external sources. If put under pressure in these situations, the machine will eventually admit its error and backtrack, but it may take some heavy-handed convincing to get it to this admission. We have a word for people who behave like this in life: bullshitters. In all fairness, OpenAI does provide a disclaimer cautioning against relying upon the machine as an authoritative source of information. Nevertheless, this technology can all too easily lead to the spread of misinformation.

An even larger problem emerges when unreliable automation technology like this is given real-world decision-making power (as it already has been, and likely will be again). Take the recent Australian Robodebt fiasco as an example: execution of a government debt-recovery program was left up to an automated system. Though the Social Security Act relied upon is quite clear that income should be assessed fortnight by fortnight, the machine used a method of averaging that did not take into account the wide variance in the fortnightly income of welfare recipients, and it mistakenly sent out hundreds of thousands of false debt notices. The result of this program, which was intended to save the government money, was hundreds of millions of dollars in government losses in the form of repayments owed to citizens given false debt notices. And that is not even mentioning the psychological damage inflicted on the (often impoverished) citizens receiving the false debt notices (including several suicides), the debt collectors pounding on their doors, and the subsequent class-action lawsuit.
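The arithmetic behind this kind of error is easy to reproduce. The sketch below is a simplified illustration only: the payment rate, income-free threshold and taper are invented round numbers, not the real welfare rules, and the function names are hypothetical. It shows how spreading a year's income evenly across every fortnight can create a debt that does not exist when each fortnight is assessed on what was actually earned.

```python
FORTNIGHTS_PER_YEAR = 26

# Hypothetical rules, chosen only to keep the numbers round.
FULL_RATE = 500      # benefit per fortnight with no income
FREE_AREA = 100      # income allowed before the benefit is reduced
TAPER = 0.5          # benefit lost per dollar earned above FREE_AREA

def entitlement(income):
    """Benefit owed for one fortnight, given that fortnight's income."""
    reduction = TAPER * max(0, income - FREE_AREA)
    return max(0, FULL_RATE - reduction)

def alleged_debt(assessed_incomes, benefits_paid):
    """Sum of amounts paid above the entitlement implied by the assessed incomes."""
    return sum(
        max(0, paid - entitlement(income))
        for income, paid in zip(assessed_incomes, benefits_paid)
    )

# A hypothetical recipient: employed for half the year, unemployed for the rest.
actual_incomes = [2000] * 13 + [0] * 13
benefits_paid = [entitlement(income) for income in actual_incomes]

# Averaging pretends the annual total was earned evenly in every fortnight.
average_income = sum(actual_incomes) / FORTNIGHTS_PER_YEAR
averaged_incomes = [average_income] * FORTNIGHTS_PER_YEAR

print(alleged_debt(actual_incomes, benefits_paid))    # assessed fortnight by fortnight
print(alleged_debt(averaged_incomes, benefits_paid))  # assessed on the annual average
```

Assessed on actual fortnightly income, this person was paid exactly what they were owed. Assessed on the average, they appear to have earned 1,000 in every fortnight, including the ones in which they earned nothing, so every benefit payment looks like an overpayment.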

Overreliance on these technologies in their current form is both irresponsible and societally damaging. Much more dependable technology is needed before we can even think about applying it to something as impactful as implementing government policy. Only a machine with full-text comprehension can deal with the level of textual complexity involved and avoid the errors that resulted in Robodebt.

Tying this point to ChatGPT, perhaps the most damning of the machine's shortcomings is its complete lack of comprehension with regard to the text it is generating. As ChatGPT itself will readily admit when asked to provide a critique of its own shortcomings: "One of the main limitations of ChatGPT is its inability to truly understand or comprehend the meaning of the words it is using or the ideas being expressed. While it can generate responses that are appropriate for a given context, it does not have the ability to truly grasp the underlying concepts or ideas being discussed. This limits the types of tasks that the model can effectively perform, and raises questions about its suitability for certain applications."

The importance of this point cannot be overstated. One might ask it to compose an essay or a sonnet, and more often than not it will produce something with at least the surface appearance of competence, but what is actually going on behind the scenes here? It's doing little more than reshuffling scraped content into a requested stylistic formula. In other words: Wikipedia articles forced into cookie-cutter writing templates. Intelligent engagement with the information it is manipulating is far beyond its capacities. Incremental improvements of this system will likely continue in the coming years, but this fundamental shortcoming will never be overcome using the current methods.

So how much of a problem is this? It places a ceiling on the quality of the output the system can produce, one that no amount of incremental improvement will allow it to surpass. Its data summarization and content generation capabilities have some genuine applications, but as a step towards authentic AGI, the enthusiasm for this technology is entirely misplaced. It may have some use as a labour-reducing technology, but it will never go beyond what humans can already do. A priority of AI research should be helping to overcome human limitations such as the Four Pieces Limit, not simply creating a more autonomous system with even more constrained limits.

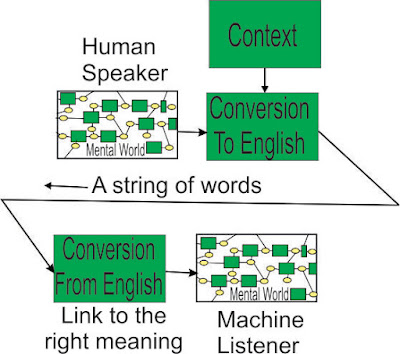

A different approach is needed if we are ever to overcome the restricted capacities of LLMs. We are currently developing Active Structure, an entirely new approach to teaching a machine language using a self-extensible, undirected network of semantic structures. Through mapping the relations between words, meaning itself begins to emerge. This is one point where our approach distinguishes itself from the prevalent LLM-based methods: our approach actually has the potential to understand not only the question it is being asked, but the response it puts together. Our approach focuses on understanding the workings of the unconscious mind and emulating its process of language comprehension in the form of a machine. Our goal is to develop a machine with the comprehension abilities of a human combined with the processing power of a computer. In this, we hope to overcome the failings of currently prevalent machine learning approaches while surpassing the processing limitations of the human mind.

- Ryan Sigmundson
